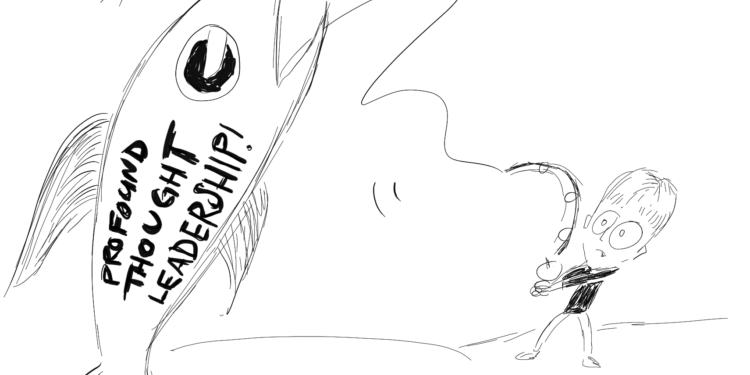

Identifying Good Search Visibility (SEO/GEO) Clients

Editorial note: I originally posted this over on Hit Subscribe. It’s the first in a series there that I’m calling “The Agency Path,” aimed at freelancers and small agencies, often in SEO/marketing, though some topics apply more broadly. So I’m back to writing about freelancing for freelancers, which is a lot…