The Death of the Obligatory Comment

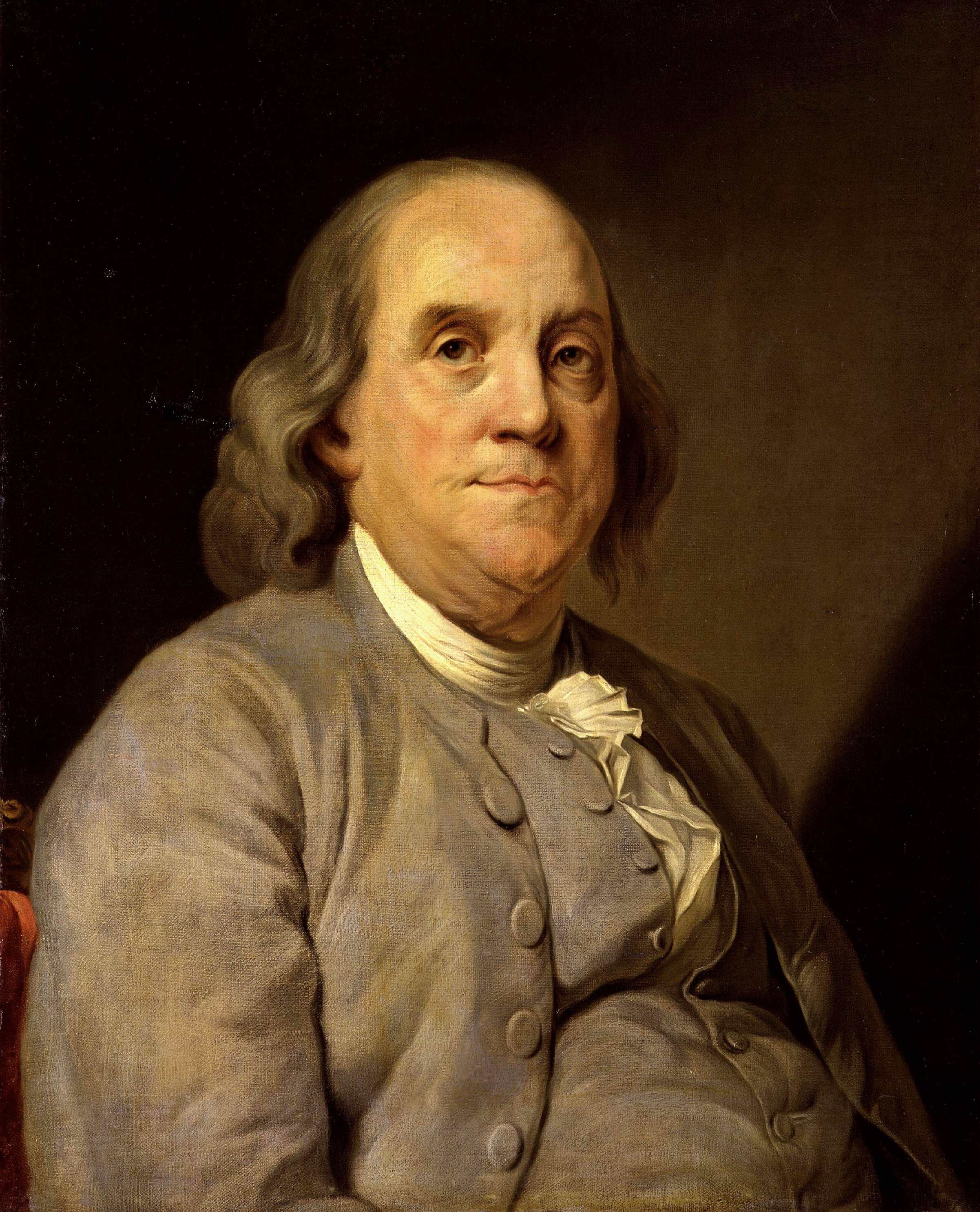

The Ben Franklin Effect

Have you ever started looking for something, let’s say your wallet, and been fairly certain that it wasn’t in your house? You started looking in all of the most likely places and progressed with increasing glumness to less and less likely landing spots. Perhaps you eventually wound up in the crawl space that you hadn’t entered since six months prior to losing your wallet. If you’ve had an experience like that, do you recall a point at which you thought to yourself, “this is completely pointless” and yet you kept doing it anyway?

Even if you can’t recall an experience like this, I imagine that you’ve seen similar stories unfold in countless other places. In politics and government, for example, can you count the number of times some matter of policy has proven to be an obvious mistake and yet keeps going anyway like an unstoppable juggernaut, with resolute phrases like “stay the course” uttered as sober non sequiturs? In the end it all seems silly; it seems to be a way to say “we know this is a mistake and we’re going to keep doing it anyway.”

I believe that the persistence of this mystifying behavior as a mainstay of the human condition has two main sources. The first is that, without careful consideration, we tend toward logical fallacy, and this is an example of the fallacy “appeal to consequences” (aka “wishful thinking”). In other words, “I’m going to look for my wallet in the crawl space because I believe it’s in there, since it would be a real bummer if it weren’t.” We tend to double down on our mistakes because we really wish we hadn’t made them.

The second source of this is a truly fascinating study of our motivations as humans for our actions and interactions. A blog I really like called “You Are Not So Smart” defines this as “the Ben Franklin Effect.” I highly recommend a start-to-finish read of this post, and will warn you here that my next paragraph will be a spoiler, so go read it, please.

Oh, hi there. You’re back? Cool! As you now know, the premise of the post is that Ben Franklin figured out a way to turn someone who didn’t like him (a “hater”) into an ally. He asked that person to do him a favor… and it worked. As it turns out, we tend to like people because we do them favors, rather than doing them favors because we like them. The reason for this is that we construct narratives about our motivations and preferences that rationalize our past decisions: “I must like that guy, since otherwise my doing him a favor would have been stupid, and since I’m obviously not stupid…” (For you rhetoric buffs, this is another logical fallacy called “begging the question.”)

David McRaney puts this nicely in his post:

If you live in the Deep South you might buy a high-rise pickup and a set of truck nuts. If you live in San Francisco you might buy a Prius and a bike rack. Whatever are the easiest to obtain, loudest forms of the ideals you aspire to portray become the things you own, like bumper stickers signaling to the world you are in one group and not another. Those things then influence you to become the sort of person who owns them.

What Does this Have to Do With Code Comments?

I’m glad you asked. The answer is that for me, and I suspect for a lot of you, putting comment headers above all methods, classes, fields, etc., is a doubling down on a behavior that probably makes no sense, a staying of the course, and a thing that we like doing because we did it.

When I was in college, I don’t think I ever commented my code. At that time, as I recall, we were young and naive and wore code unreadability on our sleeves as a badge of honor; anyone who could execute an entire complex looping sequence in the condition and increment statements of a for loop was a total ninja, after all. When I left school and went to the professional world, I was confronted with people who wrote various kinds of code, but one was a Linux kernel hacker that I truly respected and I noticed that he dutifully put comment blocks above all of his methods. Included in these were descriptions of what the method did, what it returned, what the arguments were, what invariants it preserved, and a journal log of edits made to it.

This was an epiphany for me. All of those guys with their non-commented, zany for loops were amateurs. Real programmers did that kind of ninja stuff and then put a nice, clear comment on top that explained to mere mortals how awesome they were. I was hooked. I wanted to be that guy, and so I became a guy who wrote those kinds of comments above methods and everywhere else. I didn’t do this halfway; I was the kind of guy who went the extra mile to thoroughly document everything.

Years, jobs and programming languages later, this practice persisted, long outlasting my opinion that it was a badge of honor to cram multiple execution statements into loop iterators and furrow people’s brows with inscrutable pointer arithmetic (having gravitated toward code that was clear, readable and maintainable). A funny thing started happening to me, though. I started reading blog posts like this one, where people said things like:

What makes comments so smelly in general? In some ways, they represent a violation of the DRY (Don’t Repeat Yourself) principle, espoused by the Pragmatic Programmers. You’ve written the code, now you have to write about the code.

…

First, where are comments indeed useful (and less smelly)? If you are writing an API, you need some level of generated documentation so that people can use your API. JavaDoc style comments do this job well because they are generated from code and have a fighting chance of staying in sync with the actual code. However, tests make much better documentation than comments. Comments always lie (maybe not now, but on a long enough timeline, all comments will become outdated). Tests can’t lie or they fail. When I’m looking at work in progress on projects, I always go to the tests first. The comments may or may not be there, but the tests define what’s now done and working.

The first few times I read or heard arguments like this, I dismissed them as the work of people too lazy to write comments. As I started reading more such posts… I simply stopped thinking about them. It was a silly argument — after all, I had spent countless hours throughout my career writing comments and I wouldn’t spend all that time doing something that might not be a good idea. A friend of mine likes to satirize this type of reasoning by saying “if the facts don’t fit the dogma, the facts must be discarded,” and while the validity of comments in code is truly a matter of opinion rather than fact, the same logic applies to my reasoning. I discarded an argument because not discarding it would have made me feel bad about prior decisions that I had made.

Nagging Doubts and Real Conclusions

In spite of my best efforts simply to ignore arguments that I didn’t like, I was plagued by cognitive dissonance on the matter. Why would otherwise rational and obviously quite intelligent people say such ridiculous things about my beloved exhaustive commenting? Determined to reconcile these divergent beliefs, I decided one day while documenting code smells on a wiki for a former employer that they didn’t really mean that comments were a code smell in general – they must only be talking about stupid comments like putting “//this is a for loop” above a for loop. Yeah, of course. That’s what they meant.

But it wasn’t. In spite of myself, I had read and processed the arguments. I knew that comments weren’t DRY and that they represented duplication of the logic of the code. I knew that heavily commented code tended to mask poor naming choices and unintuitive abstractions. I knew that comments, even XML/Javadoc comments for public-facing APIs, tended to start out helpful and accurate and then to rot over time as different people took over maintenance and didn’t think to change them along with the code. Heck, I’d experienced that one firsthand, with inaccuracies and inconsistencies piling up as surely as they do in a non-normalized database.
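To make the rot concrete, here is a minimal Java sketch. Everything in it is invented for illustration (the `CommentRotDemo` class, the pricing rule, the thresholds); it is not taken from any real code base. The first method carries a header comment that has drifted away from the code beneath it, while the second states the same rule through names alone, so there is no separate description left to fall out of sync.

```java
// Hypothetical pricing rule, invented purely for illustration.
class CommentRotDemo {

    static final class LegacyPricing {
        /**
         * Applies a 10% discount to orders over $100.
         * (Deliberately stale: the code below was later changed to 15% and
         * $50, and nobody updated this header.)
         */
        static long calc(long t) {
            if (t > 5_000) {
                return t * 85 / 100;
            }
            return t;
        }
    }

    static final class Pricing {
        static final long BULK_ORDER_THRESHOLD_CENTS = 5_000;
        static final long PERCENT_CHARGED_AFTER_BULK_DISCOUNT = 85;

        static boolean qualifiesForBulkDiscount(long orderTotalCents) {
            return orderTotalCents > BULK_ORDER_THRESHOLD_CENTS;
        }

        static long totalAfterBulkDiscountCents(long orderTotalCents) {
            if (!qualifiesForBulkDiscount(orderTotalCents)) {
                return orderTotalCents;
            }
            return orderTotalCents * PERCENT_CHARGED_AFTER_BULK_DISCOUNT / 100;
        }
    }

    public static void main(String[] args) {
        // Both versions compute the same thing; only one of them misleads the reader.
        System.out.println(LegacyPricing.calc(10_000));
        System.out.println(Pricing.totalAfterBulkDiscountCents(10_000));
    }
}
```

Both methods behave identically. The difference is that a maintainer changing the threshold in the second version has only one place to change it.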

So, eventually I was forced to see the light and admit that it was possible and even probable that my efforts had been a complete waste of time over the years. Sound harsh? Well, here’s the thing. What percentage of those comments that I wrote are still floating around in some code base somewhere, not deleted? And of those, how many are still accurate? I’m guessing the number is tiny; the comments are dust in the wind. And before they blew away, what are the odds that anyone cared enough to read them? What are the odds that anyone cares that “ebd changed a char* to an int* on 4/15/04”?

So, I’ve stopped viewing comments as obligatory. I write them now with a sort of “when in Rome” sentiment if others do it on the code I’m working on. After all, I have plenty of practice with it. But my real preference, in light of my focus on abstractions, is now to have the attitude that I am forbidden from writing comments. If I want to make my code usable and understandable, I have to do it with tight abstractions, self-documenting code and making bad decisions impossible. Is that a lofty goal? Sure. But I think it’s a good one. I’ve gone from viewing comments as obligatory to viewing them as indicative of small design failures, and I’m content with that. I think that all along part of me viewed commenting my methods as redundant and I’ve finally rid myself of the cognitive dissonance.
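As one possible reading of “making bad decisions impossible,” consider a method that would otherwise need a header comment along the lines of “percent must be between 0 and 100.” The sketch below is a hypothetical Java example (`Percentage` and `ProgressBar` are made-up names, not from any code discussed here) in which the type enforces the rule, so the comment has nothing left to say.

```java
// Hypothetical example; none of these names come from the post.
class ProgressDemo {

    /** A whole-number percentage that cannot exist outside 0..100. */
    static final class Percentage {
        private final int value;

        private Percentage(int value) {
            this.value = value;
        }

        static Percentage of(int value) {
            if (value < 0 || value > 100) {
                throw new IllegalArgumentException("Percentage must be 0..100, got " + value);
            }
            return new Percentage(value);
        }

        int value() {
            return value;
        }
    }

    static final class ProgressBar {
        private Percentage completed = Percentage.of(0);

        // The parameter type carries the range rule, so no header comment has to.
        void advanceTo(Percentage newCompleted) {
            completed = newCompleted;
        }

        /** Renders ten cells, one per full 10% completed. */
        String render() {
            int filledCells = completed.value() / 10;
            StringBuilder bar = new StringBuilder();
            for (int cell = 0; cell < 10; cell++) {
                bar.append(cell < filledCells ? '#' : '-');
            }
            return bar.toString();
        }
    }

    public static void main(String[] args) {
        ProgressBar bar = new ProgressBar();
        bar.advanceTo(Percentage.of(40));
        System.out.println(bar.render());
    }
}
```

A caller who tries `Percentage.of(140)` fails immediately at the point of the mistake, rather than after skipping a comment nobody read.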

Erik, I think there’s a place for comments. Coding is essentially an act of communication. Would you prefer the following DRY code:

    int question = Convert.ToInt32(Request.Form["q"].Split(".")[0].Substring(1));
    int section = Convert.ToInt32(Request.Form["q"].Split(".")[1].Substring(1));

or the commented one?

    // example of "q" – Q35.S47. All inputs previously validated in ValInputs()
    int question = Convert.ToInt32(Request.Form["q"].Split(".")[0].Substring(1));
    int section = Convert.ToInt32(Request.Form["q"].Split(".")[1].Substring(1));

Comments communicate intentions and assumptions. If you are doing maintenance programming, a deftly placed comment can save a lot of hurt stepping through code. Code is never bug free and comments tell us what was intended and it helps when trying to figure out whether a piece…

Hi Chui – thanks for reading and for the comment. I suppose if I had to pick one of those or the other, I would choose the one with the comment, but truth be told, if I ran across either version, I’d delete it and rewrite it. This is kind of a larger theme of writing self-documenting code and thus not needing comments. Code that’s littered with Law of Demeter violations, mystery literals and confusing nested semantics is the root problem. Comments are simply cologne one sprays on to cover the code smell. Why not: var myFormProcessor = new FormProcessor(Request.Form)…
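The reply above is cut off, so what follows is not a reconstruction of it. It is only a rough Java sketch of the general direction: moving the “Q35.S47” knowledge out of a comment and into a small named type. The `QuestionReference` class, its method names, and the assumption that the format is always `Q<number>.S<number>` are all hypothetical, inferred from the single example in the comment thread.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical value type; not the FormProcessor mentioned in the truncated reply.
final class QuestionReference {
    // Assumed format, inferred from the lone example "Q35.S47": Q<question>.S<section>
    private static final Pattern FORMAT = Pattern.compile("Q(\\d+)\\.S(\\d+)");

    private final int question;
    private final int section;

    private QuestionReference(int question, int section) {
        this.question = question;
        this.section = section;
    }

    /** Parses text such as "Q35.S47", rejecting anything that does not match the assumed format. */
    static QuestionReference parse(String raw) {
        Matcher matcher = FORMAT.matcher(raw);
        if (!matcher.matches()) {
            throw new IllegalArgumentException("Expected Q<number>.S<number>, got: " + raw);
        }
        return new QuestionReference(
                Integer.parseInt(matcher.group(1)),
                Integer.parseInt(matcher.group(2)));
    }

    int question() {
        return question;
    }

    int section() {
        return section;
    }

    public static void main(String[] args) {
        QuestionReference reference = QuestionReference.parse("Q35.S47");
        System.out.println(reference.question() + " / " + reference.section());
    }
}
```

With something like this in place, the calling code reads `QuestionReference.parse(...)` followed by `question()` and `section()`, and the validation that the original comment promised (“previously validated in ValInputs()”) happens where the value is created instead of being asserted in prose.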

One place comments help me out is in documenting external API expectations. For example, fields in an SSO cookie may change, or change order. If I have some comments showing what I expected, when the API is updated, I can correlate the old fields to the new ones.

This of course may be something I’m reinforcing as valuable since I recently encountered it – or in this case, didn’t encounter. I definitely agree that comments should be reduced in scope and quantity and their presence should be evaluated before use.

I think the subject definitely gets more nuanced when it comes to public facing APIs. My strong preference (when consuming them) is examples with accompanying explanation, but having method doc comments, when possible, is certainly better than nothing.